Oscilloscope technology advanced rapidly from its late 19th-century roots through the triggered sweep and, in the 1980s, the digital/flat-screen transformation. The trend has since accelerated: bandwidth and sampling rates have expanded exponentially, and new features and new measurement and display capabilities have emerged.

In this context, one wonders what lies ahead. The major oscilloscope manufacturers maintain robust research and development departments, with many theoreticians and engineers striving to introduce enhanced instruments.

To get a sense of where all this is going, we queried major oscilloscope manufacturers regarding innovations down the road. Among them was Tektronix. Says Gary Waldo, Product Planner, Core Real Time Scopes, Although the focus is often on scopes themselves, probing is a significant source of frustration and great opportunity for creative scope engineering. Engineers demand new and innovative approaches to connectivity as systems get smaller. Probes must rise to meet performance demands: higher bandwidth, lower noise, and lower capacitive loading. We’ve recently introduced optically isolated probes, which we call IsoVu, one of the biggest advances in probing technology in recent history. IsoVu probes use optics to provide high isolation and high common-mode rejection.

Analysis will continue to expand beyond basic signal visualization. Most scopes in the midrange can acquire almost all signals of interest. But just seeing a signal isn’t good enough anymore. Engineers want the scope to provide higher levels of information about the signal — whether it’s power analysis, decoding serial buses, signal integrity measurements, or much more advanced analysis like jitter.

Scopes have become more upgradeable. In the past, bandwidths and record lengths had to be specified at the time of purchase. You got what you got. Now scopes are designed to be upgradeable, often in the field. This provides more flexibility to meet changing workloads and extends the life of the instrument. There will probably be further advances in the area of adding capacities of these instruments to meet whatever needs arise.

Historically, scopes have been focused on the time domain. But some problems are easier to solve in the frequency domain, especially if you are able to tie your frequency domain events back to related time domain phenomena. As such, we’re seeing scopes move way beyond the traditional FFT by providing much more elegant and advanced frequency domain analysis with some scopes even providing an integrated spectrum analyzer. Tek has done some pioneering work on incorporating frequency domain analysis in scopes: for example the Mixed Domain Oscilloscope.

Usability is important. It may not sound like much, but if we can save the engineer a few seconds on each interaction with the scope by providing a simpler, more intuitive UI, that time really adds up. Many engineers use scopes in spurts, so it is important to minimize the “re-learning curve”. And beyond the time savings, the frustration level is kept down enabling them to focus on the problem at hand rather than focusing on how to drive the scope.

We’ve recently introduced scopes that use a smart-phone-like interface. Scopes are precision instruments that have to measure thousandths of volts and billionths of seconds, so making them operate like consumer products is quite challenging. However, trends in consumer products will continue to find their way into oscilloscopes.

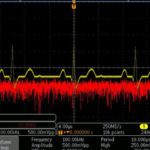

Higher resolution and lower noise. Digital oscilloscopes have featured eight-bit vertical resolution for the last 15-20 years, as a lot of emphasis was put on speed. But signals have shrunk significantly from the days of TTL logic, to support much higher data rates. Power efficiency requirements and battery-powered devices are also leading to lower and lower voltage signals. So recently scopes have progressed to 12-bit ADCs or even higher.

This technology shift has allowed engineers to make more accurate measurements, especially on low-voltage signals. Of course, more vertical resolution only matters if you have a low-noise acquisition system that doesn’t add noise to the measurement. Low noise enables you to bring the signal out of the noise and make a more accurate measurement.
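What the extra ADC bits buy can be sketched numerically. The Python below assumes an ideal converter and a hypothetical full-scale range of 8 mV (8 vertical divisions at 1 mV/div); it computes the number of code levels, the voltage of one code step (LSB), and the textbook quantization-limited SNR of 6.02N + 1.76 dB for a full-scale sine wave. The `enob` helper inverts that formula to show how acquisition noise erodes resolution; the 56 dB SINAD figure is invented for illustration and is not a specification of any real instrument.

```python
def lsb_size(full_scale_volts: float, bits: int) -> float:
    """Voltage step represented by one ADC code (one least significant bit)."""
    return full_scale_volts / (2 ** bits)


def ideal_snr_db(bits: int) -> float:
    """Quantization-limited SNR of an ideal N-bit ADC for a full-scale sine wave."""
    return 6.02 * bits + 1.76


def enob(sinad_db: float) -> float:
    """Effective number of bits implied by a measured SINAD, in dB."""
    return (sinad_db - 1.76) / 6.02


# Hypothetical vertical setting: 8 divisions at 1 mV/div -> 8 mV full scale.
FULL_SCALE = 8 * 1e-3

for n in (8, 12):
    print(f"{n}-bit: {2 ** n} levels, "
          f"LSB = {lsb_size(FULL_SCALE, n) * 1e6:.2f} uV, "
          f"ideal SNR = {ideal_snr_db(n):.2f} dB")

# Hypothetical: a 12-bit front end whose measured SINAD is only 56 dB.
print(f"ENOB at 56 dB SINAD: {enob(56.0):.1f} bits")
```

Under these assumptions, going from 8 to 12 bits shrinks the code step by a factor of 16, from about 31 µV to about 2 µV, yet a front end that delivers only 56 dB SINAD would behave like a converter of roughly 9 effective bits, which is why a low-noise acquisition path matters as much as the ADC's nominal bit count.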

John Marrinan, a UK Tektronix field application engineer, has this to say: “What’s the future for scopes? Your guess is as good as mine. What I certainly think is that you will continue to see more integration of the boxes on your lab bench into a single product, and I believe that this product will fundamentally be a scope. There will be more miniaturization and improved performance in modular-based scopes. And there will be a greater influence from the consumer world on the test and measurement world. By this I mean smartphone-type interfaces on scopes, instrument control through tablets, and more customization with widgets and apps.

“At the highest end of research, things will stand still and, in my opinion, there will be no change at all… they’ll just want higher bandwidths, faster sample rates and more accuracy, like always.”

While we’re looking ahead, we should consider the future from a different perspective: not just that of instrumentation, but that of the electrical and electromagnetic waves themselves. Oscilloscope manufacturers have worked for years to achieve greater bandwidth. This is an expensive undertaking, though quite doable. But have you wondered whether there is some maximum frequency that cannot, in principle, be exceeded?

Frequency and wavelength are inversely related (f = c/λ for an electromagnetic wave in vacuum). It has been theorized that wavelength cannot be less than the Planck length, which would imply an upper limit on frequency. Observations have found no sign of quantum graininess down to scales orders of magnitude below the Planck length, although this limit may apply only to particles that have mass. This particular topic is speculative and controversial. Photons have zero rest mass and are never at rest; a moving photon, however, carries energy, which is one aspect of mass.

A 2009 paper posted on arXiv.org, titled “Testing Einstein’s special relativity with Fermi’s short hard gamma ray burst GRB090510,” states that gamma-ray bursts are the most powerful explosions in the universe and probe physics under extreme conditions. Gamma-ray bursts divide into two classes, of short and long duration, thought to originate from different types of progenitor systems. The physics of their gamma-ray emission is still poorly known, over 40 years after their discovery, but it may be probed by their high-energy photons.

In that burst, a single photon of about 31 GeV detected during the first second sets limits on a possible linear energy dependence of the propagation speed of photons (Lorentz-invariance violation), requiring for the first time a quantum-gravity mass scale significantly above the Planck mass.

Taking the Planck length (about 1.6×10⁻³⁵ m) as the smallest unit of spatial extension, a wave of that wavelength would have a frequency f = c/λ of roughly 1.9×10⁴³ Hz. By way of comparison, gamma rays typically have frequencies above 10¹⁹ Hz, some 24 orders of magnitude lower and yet far beyond the bandwidth of even the highest-priced oscilloscopes.

Moreover, because the energy of a photon is proportional to its frequency (E = hf), waves at this highest frequency would require vast amounts of energy, the total depending on how much radiation is involved. A single such photon would carry about 1.2×10¹⁰ J, or roughly 7.7×10²⁸ eV.
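The Planck-length arithmetic can be checked in a few lines. This Python sketch uses the exact SI values of c, h and the electronvolt together with the CODATA 2018 value of the Planck length (1.616255×10⁻³⁵ m); the helper function names are illustrative only. It applies f = c/λ and then E = hf. Note that 1/l_P on its own is about 6.2×10³⁴, but that is a wavenumber in m⁻¹, not a frequency; multiplying by c is what converts it to hertz.

```python
import math

# Exact SI defining constants (2019 SI) and the CODATA 2018 Planck length
C = 299_792_458.0              # speed of light in vacuum, m/s
H = 6.626_070_15e-34           # Planck constant, J*s
EV = 1.602_176_634e-19         # joules per electronvolt
PLANCK_LENGTH = 1.616_255e-35  # m


def frequency_from_wavelength(wavelength_m: float) -> float:
    """Frequency of an electromagnetic wave in vacuum: f = c / wavelength."""
    return C / wavelength_m


def photon_energy(frequency_hz: float) -> float:
    """Energy of a single photon in joules: E = h * f."""
    return H * frequency_hz


f_max = frequency_from_wavelength(PLANCK_LENGTH)
e_photon = photon_energy(f_max)

# 1 / PLANCK_LENGTH (about 6.2e34) is a wavenumber in 1/m; multiplying by c gives Hz.
print(f"frequency at the Planck length: {f_max:.3e} Hz")
print(f"single-photon energy: {e_photon:.3e} J = {e_photon / EV:.3e} eV")

# Gap to a 1e19 Hz gamma ray, in orders of magnitude
gap = math.log10(f_max / 1e19)
print(f"orders of magnitude above 1e19 Hz: {gap:.1f}")
```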

Besides measuring and displaying extremely high-frequency waveforms, oscilloscopes of the future may play a role in the development and subsequent operation of artificial intelligence. Such an oscilloscope need not be a hardware-based instrument. Software-based oscilloscopes are currently at an early stage of development, and most do not really compete with bench models. But in a post-human-dominated world, artificially intelligent entities would presumably have the ability to design and install software in their enormous computer networks capable of realizing virtual oscilloscope instrumentation. Such instrumentation could synthesize and modify what Hugh Everett termed the universal wave function in his many-worlds interpretation of quantum reality.
